When academic output appears to rise in tandem with administrative rank, what is called into question is no longer individual capability, but the boundaries of the system itself.

By Xu Hengzhi

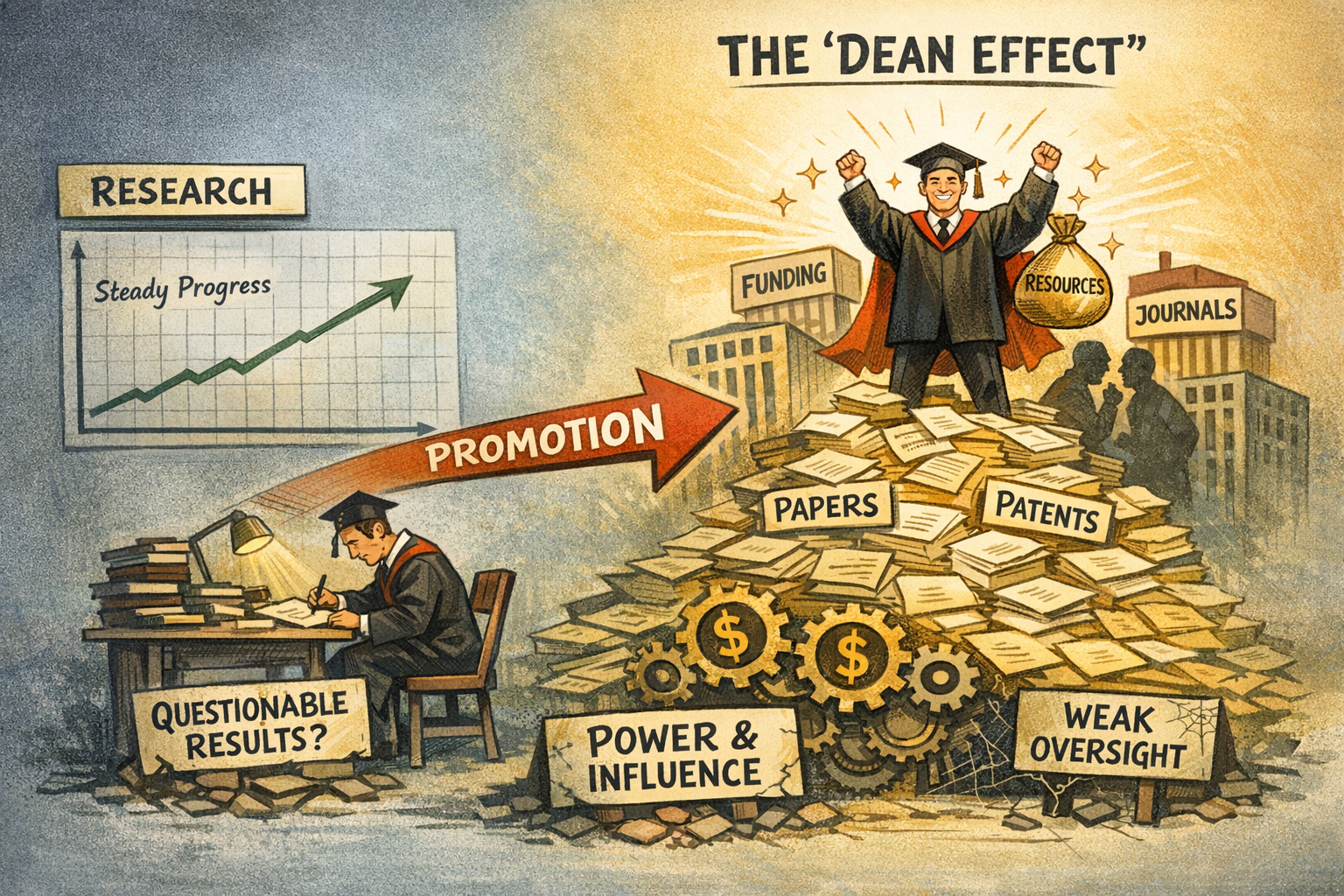

In recent years, the so-called “Dean Effect” has drawn increasing attention. In some cases, scholars experience a sharp, discontinuous surge in publications and patents after assuming deanships. These increases often lack continuity with prior research trajectories and are not necessarily accompanied by improvements in quality.

The issue, therefore, is not individual performance, but institutional logic. When academic output appears to “grow” with position, the problem shifts from scholarly capacity to potential blind spots in evaluation and oversight systems.

From Anomaly to Structural Signal

Under normal conditions, academic production follows path dependency: stable research agendas, relatively consistent output rhythms, and a general alignment between quantity and quality. The “Dean Effect,” however, shows a different pattern—rapid output expansion across multiple, sometimes unrelated fields.

Such changes are difficult to explain by increased productivity alone. They point instead to a redistribution of authorship and credit driven by non-academic factors. The key issue is not how much research is done, but how outputs are attributed. This makes the phenomenon a structural signal rather than an isolated anomaly.

Power Embedded in Knowledge Production

In the university system, a dean is not merely an academic figure but also a holder of critical resources—funding, projects, platforms, and evaluative authority. When these resources intersect with research processes, they may reshape how outputs are generated and assigned.

In collaborative research environments, authorship attribution already allows some flexibility. Once power enters this space, it may lead to expanded authorship, symbolic affiliations, or strategic collaborations. More concerning is the emergence of implicit “resource–publication exchange” mechanisms, in which control over resources and influence may be traded for publication opportunities through reciprocal arrangements with journals or networks.

In such cases, output growth may partially derive from positional authority rather than direct intellectual contribution.

Systemic Impacts on the Research Ecosystem

The implications extend beyond individual cases.

First, academic fairness is undermined. The principle that authorship should reflect contribution is weakened, eroding trust in evaluation systems.

Second, innovation incentives are distorted. When credit allocation shifts away from originality, researchers may gravitate toward dependency-based collaborations and risk-averse outputs, moving from problem-driven to relationship-driven research.

Third, evaluation systems are reshaped. High output linked to administrative rank reinforces quantity-based metrics, creating a feedback loop: more power leads to more output, which in turn legitimizes the metric itself, marginalizing quality and originality.

Finally, public perception of governance is affected. Repeated and unexplained output surges may foster the impression that academic results are influenced by power and that oversight mechanisms are ineffective. Over time, this can weaken confidence not only in academic governance but also in the fairness and credibility of evaluation and disciplinary systems more broadly.

The “Powerization” of Research

At a deeper level, the “Dean Effect” reflects a broader trend: the increasing embedding of knowledge production within organizational and power structures. Academic output is no longer determined solely by intellectual processes but is shaped by institutional environments.

This trend is not unique to one system—large-scale research everywhere depends on coordination and resources. The issue arises when such power lacks clear boundaries, shifting from enabling research to restructuring it.

Institutional Blind Spots

The core problem lies in the lack of systematic scrutiny of research outputs produced by individuals with dual academic and administrative roles.

In cadre evaluation, publications and patents are often treated as performance indicators, yet little attention is paid to their underlying production logic—whether outputs align with prior expertise, whether growth patterns are consistent with academic norms, or whether they correlate with resource allocation.

Meanwhile, disciplinary oversight tends to focus on visible issues such as funding use and project management, while paying limited attention to authorship attribution, implicit exchanges, or power-influenced credit distribution.

This creates a gray zone: outputs are incentivized in evaluation but insufficiently examined in oversight, allowing positional advantages to translate into academic output.

Re-establishing Boundaries

The solution lies not in restricting output, but in clarifying institutional boundaries.

First, research outputs should be treated as accountability indicators in cadre evaluation, with attention to their structure, origin, and consistency with scholarly background.

Second, disciplinary oversight should incorporate authorship and output attribution into risk assessment, focusing on patterns of potential misuse rather than isolated cases.

Third, data-driven evaluation mechanisms should be introduced to analyze the relationship between outputs and resource allocation, enabling systematic identification of anomalies.
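
As a rough illustration of what such anomaly screening might look like, the sketch below compares a scholar's yearly output after an appointment with their own pre-appointment baseline. The function name, the input format, and the threshold of three standard deviations are all assumptions made for this example, not an established method. It covers only the output side and does not model resource allocation. A flagged year is a prompt for closer review, not evidence of misconduct.

```python
from statistics import mean, stdev


def flag_output_surge(yearly_outputs, appointment_year, z_threshold=3.0):
    """Return post-appointment years whose output far exceeds the prior baseline.

    yearly_outputs: dict mapping year -> number of publications and patents
    (a hypothetical input format chosen for this sketch).
    """
    before = [n for year, n in yearly_outputs.items() if year < appointment_year]
    if len(before) < 2:
        return []  # too little history to estimate a baseline

    baseline = mean(before)
    spread = stdev(before) or 1.0  # fall back to 1.0 when prior output is flat

    flagged = []
    for year in sorted(yearly_outputs):
        if year >= appointment_year:
            z = (yearly_outputs[year] - baseline) / spread
            if z > z_threshold:
                flagged.append(year)
    return flagged


# Hypothetical record: steady output, then a jump in the appointment year.
record = {2015: 4, 2016: 5, 2017: 3, 2018: 4, 2019: 15, 2020: 5}
print(flag_output_surge(record, appointment_year=2019))
```

Using each scholar's own history as the baseline reflects the point about path dependency: what matters is the break from a prior trajectory, not the absolute level of output.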

Through coordinated evaluation and oversight, a basic principle can be reinforced: administrative position should not serve as a channel for automatic output growth, and power should not determine academic credit.

Conclusion

The significance of the “Dean Effect” lies not in numerical anomalies, but in what it reveals about the relationship between power and knowledge production. When this relationship lacks clear boundaries, institutional incentives and constraints become misaligned.

The issue, therefore, is not about individuals, but about systems. Strengthening the linkage between evaluation and disciplinary oversight is essential to safeguarding academic integrity and institutional fairness.